You already know how a normal touchscreen behaves: tap, swipe, pinch, repeat. It works, but the surface is still flat, hard, and limited. Artificial skin changes the idea completely. Instead of treating touch as a simple command, a flexible, skin-like membrane can respond to pressure, bending, stretching, and surface deformation. That means your future devices could understand not only where you touched them, but how you touched them.

Why This Breakthrough Matters

The research points toward a future where interactive devices feel less like rigid electronics and more like responsive surfaces. Picture a phone case that senses your grip, a wearable that comfortably bends with your body, or a robot hand that can tell the difference between holding a steel tool and touching human skin.

That is a huge shift. Today, most devices wait for clean digital commands. Artificial skin gives machines a richer physical sense of the world. When you press harder, slide slowly, squeeze gently, or tap quickly, the device can read those differences as meaningful information.

What Artificial Electronic Skin Actually Is

Artificial electronic skin, often called e-skin, is a flexible sensing surface built to imitate some of the jobs handled by human skin. Your skin can detect pressure, vibration, heat, texture, and pain. An electronic version does not need to copy every biological detail, but it does need to capture physical interaction in a smarter way than a normal touchscreen.

A skin-like interface can include flexible polymers, soft membranes, conductive traces, pressure sensors, stretch sensors, and tiny electronic pathways. When you touch or deform the surface, those components create measurable electrical changes. The system then turns those changes into data a computer can understand.

- The outer layer bends, stretches, and compresses so the device can react more naturally to your hand.

- Pressure and deformation sensors detect how the surface is being touched, pressed, or pulled.

- Thin electronic pathways carry touch signals without needing a rigid circuit board at every point.

- Algorithms help translate raw touch changes into useful commands, gestures, or feedback.

How It Connects to Human Skin

Your skin is packed with specialized receptors that help your brain understand the physical world. You can feel a light tap, a tight grip, a rough surface, or a vibration because your nervous system constantly processes touch information.

Artificial skin borrows from that idea. Instead of nerve endings, it uses sensors. Instead of biological signals, it uses electrical readings. Instead of your brain interpreting touch, software can analyze the patterns.

This is why the technology is so interesting. It does not just make a device softer. It gives the device a better sense of physical context. A future phone, robot, or wearable could react differently depending on whether you tap, press, squeeze, stroke, or hold it.

How the Interface Could Work

When you press into a flexible artificial skin membrane, the surface changes shape. That deformation can alter resistance, capacitance, or another measurable electrical property inside the material. The device reads those changes and maps them into a touch pattern.
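To make the capacitance case concrete, here is a minimal sketch that treats one sensing cell as an ideal parallel-plate capacitor. The cell size, layer thickness, and permittivity are assumptions picked for illustration, not values from any particular e-skin design, and a real membrane would behave less ideally.

```python
# Hypothetical model: one capacitive sensing cell as an ideal parallel-plate capacitor.
EPSILON_0 = 8.854e-12  # vacuum permittivity in farads per meter


def plate_capacitance(area_m2, gap_m, relative_permittivity=3.0):
    """Ideal parallel-plate capacitance: C = eps0 * eps_r * A / d."""
    if gap_m <= 0:
        raise ValueError("gap must be positive")
    return EPSILON_0 * relative_permittivity * area_m2 / gap_m


# Assumed cell: 2 mm x 2 mm electrode over a 100 micrometer soft dielectric layer.
area = 2e-3 * 2e-3
rest = plate_capacitance(area, 100e-6)
pressed = plate_capacitance(area, 80e-6)  # a press squeezes the gap by 20%

relative_change = (pressed - rest) / rest
print(f"rest: {rest * 1e12:.3f} pF, pressed: {pressed * 1e12:.3f} pF")
print(f"relative change: {relative_change:.1%}")
```

In this idealized model, squeezing the gap to 80% of its resting thickness raises the capacitance by a quarter. That shift is the kind of electrical change the readout electronics would measure.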

That pattern can tell the system several things at once: where your finger is, how hard you are pressing, how long you press, whether the surface is being stretched, and whether your touch is moving across the membrane.
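As a rough sketch of how such a pattern might be summarized, assume the membrane reports a small grid of normalized pressure values many times per second. The grid layout, the threshold, the frame interval, and the function names below are all hypothetical, and stretch sensing is left out to keep the example short.

```python
import math


def touch_features(frame, threshold=0.05):
    """Summarize one pressure frame (rows of values in the range 0..1)."""
    total = sum_r = sum_c = peak = 0.0
    for r, row in enumerate(frame):
        for c, value in enumerate(row):
            if value < threshold:
                continue  # ignore cells that are effectively untouched
            total += value
            sum_r += r * value
            sum_c += c * value
            peak = max(peak, value)
    if total == 0.0:
        return None  # nothing is touching the surface in this frame
    # The pressure-weighted centroid estimates where the finger is.
    return {"row": sum_r / total, "col": sum_c / total, "peak": peak}


def summarize_touch(frames, frame_interval_s=0.01):
    """Combine frames into location, force, duration, and movement."""
    active = [f for f in map(touch_features, frames) if f is not None]
    if not active:
        return None
    first, last = active[0], active[-1]
    return {
        "start": (first["row"], first["col"]),
        "peak_pressure": max(f["peak"] for f in active),
        "duration_s": len(active) * frame_interval_s,
        "travel_cells": math.hypot(last["row"] - first["row"],
                                   last["col"] - first["col"]),
    }


# Two made-up frames: a press in the center that slides one cell to the right.
frames = [
    [[0, 0, 0], [0, 0.5, 0], [0, 0, 0]],
    [[0, 0, 0], [0, 0, 0.8], [0, 0, 0]],
]
summary = summarize_touch(frames)
print(summary)
```

The summary reports where the touch started, the hardest press seen, how long contact lasted, and how far the contact point moved across the grid.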

Here is the simple version:

- You touch the flexible surface.

- The membrane bends or compresses.

- Sensors detect the physical change.

- Electronics convert that change into data.

- Software turns the data into an action, response, or feedback effect.
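The steps above can be sketched in a few lines of code. This is only an illustration: the 10-bit ADC range, the baseline, the pressure thresholds, and the action names are assumptions made for the example, not details of a real device.

```python
def adc_to_pressure(raw, baseline=512, full_scale=1023):
    """Electronics step: turn a raw ADC count into a 0..1 pressure value."""
    span = full_scale - baseline
    return min(1.0, max(0.0, (raw - baseline) / span))


def classify_press(pressure, light=0.15, deep=0.6):
    """Software step: turn a pressure value into a named touch event."""
    if pressure >= deep:
        return "deep_press"
    if pressure >= light:
        return "light_press"
    return "none"


# Hypothetical mapping from touch events to device actions.
ACTIONS = {"light_press": "select", "deep_press": "confirm"}


def handle_sample(raw):
    """Run one sensor sample through the whole pipeline."""
    pressure = adc_to_pressure(raw)
    event = classify_press(pressure)
    return ACTIONS.get(event)  # None means no action is triggered


for raw in (520, 650, 900):
    print(raw, handle_sample(raw))
```

With these assumed thresholds, a reading near the baseline triggers nothing, a moderate press selects, and a deep press confirms.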

Add AI to that pipeline and the device is no longer limited to fixed gestures. It could learn patterns instead, such as how you normally hold a phone, how you press when selecting something carefully, or how your grip changes during movement.

What This Could Mean for Your Phone

A future phone with artificial skin would not have to stay trapped behind a sheet of glass. Its surface could become a physical interface. Buttons might appear as raised areas. Game controls could feel different from camera controls. Accessibility features could use pressure and texture instead of only visual menus.

You might squeeze the side of a device to launch a tool, press deeper to confirm an action, or slide across a soft surface that gives tactile feedback under your finger. That makes interaction faster, more natural, and potentially easier for people who struggle with tiny on-screen controls.

The next generation of devices may not simply respond to your touch. They may understand the physical meaning behind it.

Why Robotics Needs Artificial Skin

Robots are powerful, but many of them are still clumsy when touch matters. A robot can see an object with cameras, but vision alone does not tell it how slippery, fragile, soft, or delicate that object is. That is where tactile sensing becomes critical.

Artificial skin could help robotic hands feel contact across their fingers and palms. A robot could learn how much force is safe, when an object is slipping, or whether it is touching a person instead of a tool. That matters in hospitals, homes, factories, labs, and elder-care robotics.

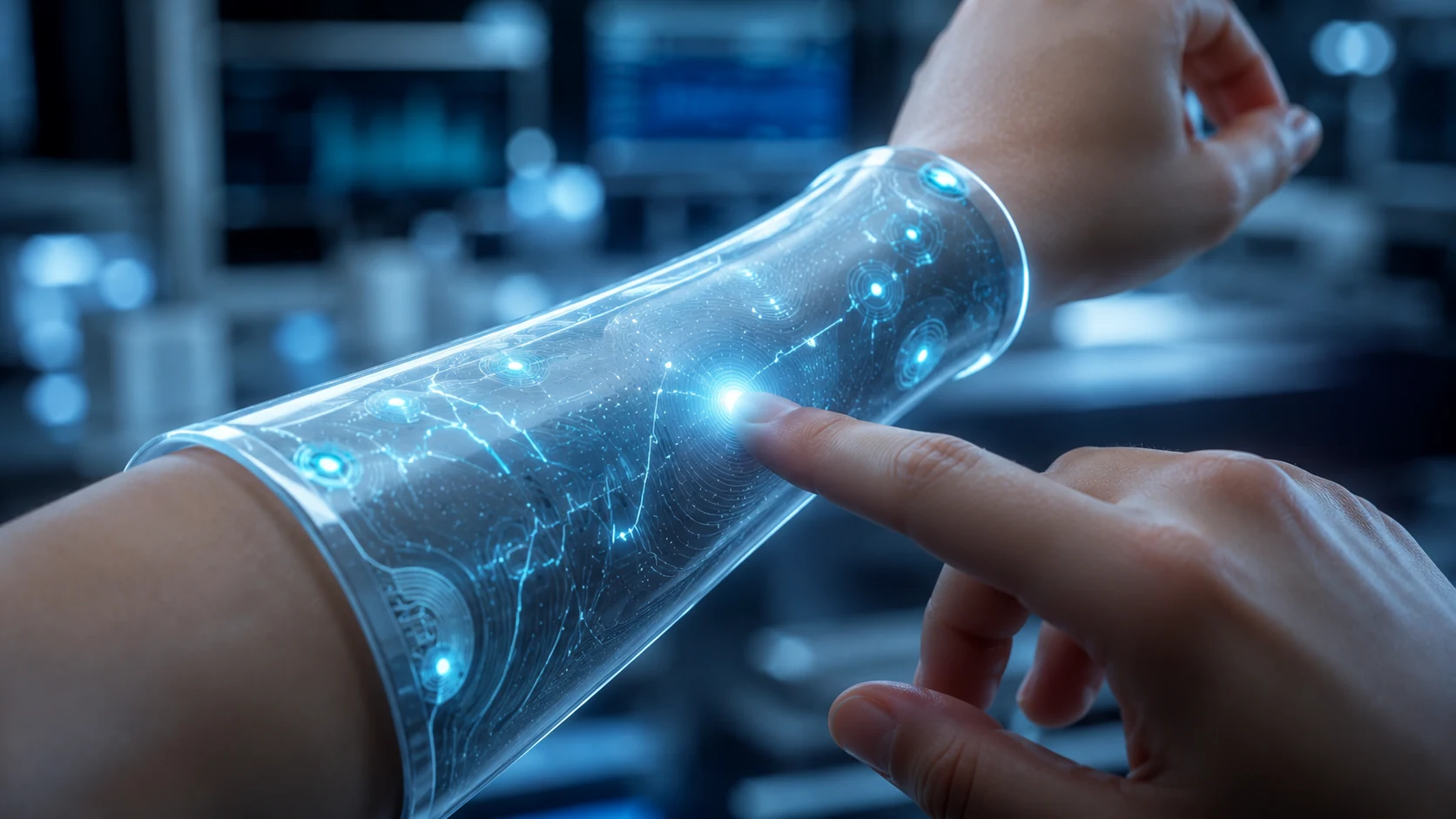

Wearables Could Become More Comfortable and Useful

Wearables are useful, but many still feel like gadgets strapped onto the body. Artificial skin points toward devices that bend with you. Instead of a bulky tracker, you could wear a thin flexible patch that monitors movement, pressure, temperature, or health signals.

That could help with physical therapy, sports training, elder care, prosthetics, and long-term health monitoring. A soft skin-like wearable could collect better data because it stays closer to the body and moves naturally with the user.

Where AI Fits Into the Picture

Raw touch data is messy. A flexible membrane can produce large amounts of changing information every time you move your hand. AI can help sort that data into meaningful patterns.

That means future systems may learn to tell apart a nervous grip, a normal grip, a deliberate command, and accidental contact. Used responsibly, this could make devices more adaptive. Used carelessly, it could raise privacy questions, because touch behavior may reveal more about you than you expect.
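To give a feel for what "learning the difference" can mean, here is a deliberately tiny sketch that uses a nearest-centroid classifier as a stand-in for the AI. The features, labels, and training numbers are invented for illustration. A real system would likely use far more data, scaled features, and a stronger model.

```python
import math


def centroid(points):
    """Average a list of equal-length feature tuples."""
    n = len(points)
    return tuple(sum(p[i] for p in points) / n for i in range(len(points[0])))


def train(examples):
    """Map each label to the centroid of its example touches."""
    return {label: centroid(points) for label, points in examples.items()}


def predict(model, features):
    """Pick the label whose centroid is closest to the new touch."""
    return min(model, key=lambda label: math.dist(model[label], features))


# Made-up training touches:
# (mean pressure 0..1, contact area fraction 0..1, duration in seconds)
EXAMPLES = {
    "accidental_contact": [(0.05, 0.05, 0.1), (0.08, 0.10, 0.2)],
    "normal_grip": [(0.30, 0.50, 2.0), (0.35, 0.55, 3.0)],
    "deliberate_press": [(0.80, 0.10, 0.5), (0.75, 0.15, 0.6)],
}

model = train(EXAMPLES)
print(predict(model, (0.78, 0.12, 0.55)))
print(predict(model, (0.06, 0.07, 0.15)))
```

Because the features are not scaled here, duration has the largest influence on the distance. That is acceptable for a toy example, but it is one reason a practical system would normalize its inputs first.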

- AI could recognize touch patterns that are too subtle for normal screens.

- Interfaces could change based on pressure, grip, and hand movement.

- Robots could improve grip and safety through tactile feedback.

- Touch-based controls could help users who need alternatives to visual menus.

The Hard Problems Still Ahead

This technology is exciting, but it is not magic. To become common in consumer devices, artificial skin has to survive real-world abuse. Phones get dropped, bent, scratched, heated, chilled, and exposed to moisture. Wearables deal with sweat, movement, and constant friction.

Engineers still need to solve durability, manufacturing cost, waterproofing, power efficiency, repairability, and long-term sensor reliability. The software side also matters. A device that collects touch data must process it quickly without draining the battery or creating privacy problems.

- Can the membrane survive years of daily use?

- Can it stay accurate after bending thousands of times?

- Can it be manufactured cheaply enough for consumer devices?

- Can it be repaired or replaced without throwing away the whole product?

- Can touch data be protected from misuse?

Final Takeaway

Artificial skin is not just a cooler touchscreen. It is a step toward machines that understand physical interaction in a more human way. Your phone could become more responsive. Your wearable could become more comfortable. Robots could become safer. Prosthetics could become more natural.

The big idea is simple: when devices learn to feel touch better, they can serve you better. That is why this research matters.